Right after the challenge closes (15th May), we will invite all participants to submit a short abstract (400 words maximum) of their method (deadline: 19th May 23:59 UTC). Based on the abstracts and the results obtained, we will decide which teams are accepted at the workshop. Accepted teams will be able to submit a paper describing their approach (deadline: 4th June 23:59 UTC). The paper template is the same as CVPR's, but the length is limited to 4 pages including references.


More information on how to install the Python package can be found here.


We have released a Python package that simulates the human interaction; you can find more information here. You don't have to submit the results anywhere: the results of the interactions are logged on the server simply by using the Python package that we provide. Once all the interactions have been done, the server checks which is your best submission and updates the leaderboard accordingly.


Datasets (Download here, 480p resolution)

- Train + Val: 90 sequences from DAVIS 2017 Semi-supervised. The scribbles for these sets can be obtained here.
- Test-Dev 2017: 30 sequences from DAVIS 2017 Semi-supervised. Ground truth is not publicly available, and the number of submissions is unlimited. The scribbles are obtained by interacting with the server.

Feel free to train or pre-train your algorithms on any other dataset apart from DAVIS (Youtube-VOS, MS COCO, Pascal, etc.) or use the full resolution DAVIS annotations and images.

For both local and server evaluation, please use the Interactive Python package.

The evaluation considers the quality a method can obtain in 60 seconds for a sequence containing the average number of objects in test-dev of DAVIS 2017 (~3 objects). For the challenge, the maximum number of interactions is 8 and the maximum time is 30 seconds per object for each interaction (so if there are 2 objects in a sequence, your method has 1 minute for each interaction). Therefore, in order to do 8 interactions, the timeout to interact with a certain sequence is computed as 30*num_obj*8. If the timeout is reached before finishing the 8 interactions, the last interaction is discarded and only the previous ones are considered for evaluation.

Local evaluation of the results is available for the train and val sets. The evaluation using test-dev against the server is available during the Test 2019 period.
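The time budget rule above can be sketched in a few lines of Python. This is only an illustration of the arithmetic stated in the rules, not code from the Interactive Python package: the function names are hypothetical, the finish times are assumed to be cumulative seconds since the start of the sequence, and treating an interaction that ends exactly at the timeout as valid is an assumption.

```python
SECONDS_PER_OBJECT = 30  # per interaction, from the challenge rules
MAX_INTERACTIONS = 8     # from the challenge rules


def interaction_budget(num_obj: int) -> int:
    """Seconds available for one interaction on a sequence with num_obj objects."""
    if num_obj < 1:
        raise ValueError("a sequence must contain at least one object")
    return SECONDS_PER_OBJECT * num_obj


def sequence_timeout(num_obj: int) -> int:
    """Total seconds allowed for a sequence: 30 * num_obj * 8."""
    return interaction_budget(num_obj) * MAX_INTERACTIONS


def evaluated_interactions(finish_times, num_obj: int) -> int:
    """Count the interactions that finished within the sequence timeout.

    finish_times is a hypothetical list of cumulative seconds at which each
    interaction finished. An interaction that ends after the timeout is
    discarded, and only the previous ones are counted.
    """
    timeout = sequence_timeout(num_obj)
    counted = 0
    for finished_at in finish_times[:MAX_INTERACTIONS]:
        if finished_at > timeout:  # assumption: finishing exactly on time counts
            break
        counted += 1
    return counted


if __name__ == "__main__":
    print(interaction_budget(2))  # seconds per interaction with 2 objects
    print(sequence_timeout(2))    # total seconds for 8 interactions
```

For a sequence with 2 objects this gives 60 seconds per interaction, matching the example in the rules, and 480 seconds for the whole sequence.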

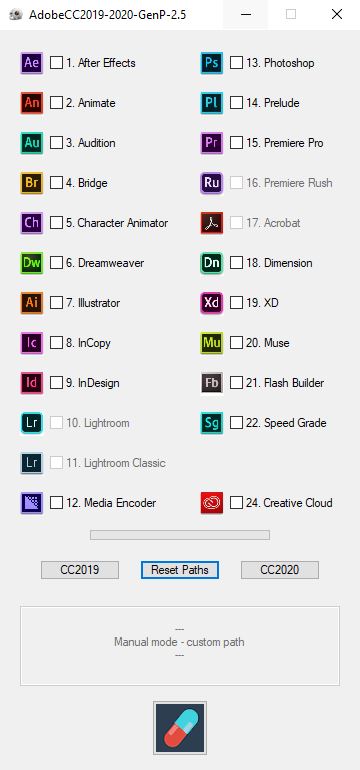

The interactive scenario assumes the user gives iterative refinement inputs to the algorithm, in our case in the form of a scribble, to segment the objects of interest. Methods have to produce a segmentation mask for that object in all the frames of a video sequence, taking into account all the user interactions. More information can be found in the DAVIS 2018 publication and the Interactive Python package documentation.

Test: 27th April 2020 23:59 UTC - 15th May 2020 23:59 UTC.

All the participants invited to the workshop will get a subscription to Adobe CC for 1 year.

In this edition, we use J&F instead of only Jaccard. Moreover, users can submit a list of frames where they would like to have the new interaction, instead of considering all the frames in a video (the default behaviour); more information here.

The main idea is that our servers simulate human interactions in the form of scribbles. A participant connects to our servers and receives a set of scribbles, to which they should reply with a video segmentation. The servers register the time spent and the quality of the results, and then reply with a set of refinement scribbles.
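The exchange between participant and server can be pictured with a toy simulation. Everything in this sketch is a hypothetical stand-in and not the Interactive Python package's API: `ToyScribbleServer`, its methods, and `run_session` are invented names, each "mask" is reduced to a single integer label per frame, and the quality score is a simple fraction of correct frames used as a placeholder for J&F.

```python
import time


class ToyScribbleServer:
    """Hypothetical in-memory stand-in for the challenge server."""

    def __init__(self, ground_truth, max_interactions=8):
        self.ground_truth = list(ground_truth)  # one toy label per frame
        self.max_interactions = max_interactions
        self.log = []  # (seconds spent, quality) for each interaction
        self._last_masks = None
        self._sent_at = None

    def has_next(self):
        return len(self.log) < self.max_interactions

    def request_scribbles(self):
        """Send a scribble and start timing the participant's reply."""
        self._sent_at = time.perf_counter()
        frame = self._frame_to_refine()
        return {"frame": frame, "label": self.ground_truth[frame]}

    def _frame_to_refine(self):
        # First interaction: frame 0. Later: first frame that is still wrong.
        if self._last_masks is not None:
            for i, (pred, gt) in enumerate(zip(self._last_masks, self.ground_truth)):
                if pred != gt:
                    return i
        return 0

    def submit_segmentation(self, masks):
        """Register time spent and quality for the submitted masks."""
        if len(masks) != len(self.ground_truth):
            raise ValueError("one mask per frame is required")
        elapsed = time.perf_counter() - self._sent_at
        correct = sum(p == g for p, g in zip(masks, self.ground_truth))
        quality = correct / len(self.ground_truth)  # placeholder, not J&F
        self._last_masks = list(masks)
        self.log.append((elapsed, quality))
        return quality


def run_session(server, num_frames):
    """Toy participant: apply each scribble, then resubmit all frames."""
    masks = [0] * num_frames
    while server.has_next():
        scribble = server.request_scribbles()
        masks[scribble["frame"]] = scribble["label"]
        server.submit_segmentation(masks)
    return server.log
```

The point of the sketch is the shape of the loop: the participant always replies with masks for every frame, and the next scribble depends on where the previous reply was wrong.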